Streamline Your Content Tracking with Python's RSS Parsing

Discover how Python's RSS parsing tools simplify content tracking, saving you time and keeping you effortlessly informed.

Tired of manually checking multiple websites for the latest updates? Simplify your information gathering with the power of Python and RSS parsing. In this guide, we'll explore how to effortlessly extract and organize content from RSS feeds. Discover how to stay updated on your favorite news sources, blogs, and other online resources, saving you time and keeping you informed.

Python's RSS Powerhouse: The feedparser Library

If you're ready to start parsing RSS feeds with Python, the feedparser library will be your best friend. It simplifies the process of reading and interpreting RSS feeds, making your code clear and easy to maintain.

Installation

Before we dive into parsing RSS feeds, let's get the feedparser library set up. Python comes with a handy tool called pip. This package manager makes it incredibly easy to install and manage the libraries you need for your projects.

To install feedparser, open your terminal or command prompt and run the following command:

```shell
pip install feedparser
```

This command tells pip to download and install the feedparser library, along with any other libraries it depends on.

Basic Usage

Now that you have feedparser ready, let's parse a real RSS feed. Here's a simple Python code snippet:

```python
import feedparser

rss_url = "https://your-favorite-website.com/rss"  # Replace with the actual RSS URL

feed = feedparser.parse(rss_url)

# Access the overall feed title
print(feed.feed.title)

# Print titles of the first few entries
for entry in feed.entries[:3]:
    print(entry.title)
```

Finding RSS URLs

Most websites that provide RSS feeds will have a small RSS icon (usually orange) or links labeled "RSS" or "Feed". If you're unsure, you can try adding "/rss" or "/feed" to the end of the website's main address.
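As a minimal sketch of the "/rss" or "/feed" guess, the hypothetical helper below builds candidate URLs from a site's address using the standard library's `urllib.parse.urljoin`. It only constructs strings; it does not check that a feed actually exists at those addresses.

```python
from urllib.parse import urljoin


def candidate_feed_urls(site_url, paths=("/rss", "/feed")):
    """Build likely feed URLs by appending common feed paths to a site's root."""
    # A leading slash makes urljoin resolve each path against the site root,
    # even if site_url points at a deeper page
    return [urljoin(site_url, path) for path in paths]
```

You could then pass each candidate to `feedparser.parse()` and keep the first one whose result has a non-empty `entries` list.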

Understanding the Structure

RSS feeds have a hierarchical structure:

- The `feed` object: Contains overall information about the feed itself, like its title, website link, and description.
- `entries`: A list of individual items within the feed. Think of each entry as a blog post or news article, with its own title, link, publication date, and so on.

Accessing Data

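The feedparser library parses the RSS feed and organizes its contents into the result returned by `feedparser.parse()`: channel-level data lives under `feed.feed`, and the individual items live in the `feed.entries` list. Let's see how to access some core elements: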

- The Importance of Titles: The title of each entry is usually the easiest way to identify what the content is about. In our example, we simply print the titles.

- Exploring Further: RSS feeds hold a wealth of data beyond just titles. Experiment with accessing other elements within the `feed` and `entry` objects. Here are a few common ones:
  - `entry.link`: The URL to the full article or webpage.
  - `entry.published`: The publication date of the entry.
  - `entry.summary`: A short summary or description (if provided).
  - `entry.author`: The author of the content (if available).

Note: The availability of specific data points can vary between different RSS feeds.
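Because the available fields vary, a defensive way to read them is `.get()` with a default. feedparser's entries are dictionary subclasses, so `.get()` works on them just as it does on a plain `dict`. The helper name `describe_entry` and the default strings below are illustrative choices, not part of feedparser.

```python
def describe_entry(entry):
    """Collect common entry fields, using .get() so missing fields don't raise."""
    return {
        "title": entry.get("title", "(untitled)"),
        "link": entry.get("link", ""),
        "published": entry.get("published", "unknown date"),
        "summary": entry.get("summary", ""),
        "author": entry.get("author", "unknown author"),
    }
```

For example, `describe_entry(feed.entries[0])` returns the same keys for every feed, whichever fields that feed actually provides.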

Important Note: Not all websites offer RSS feeds. Look for an RSS symbol or links mentioning "RSS" to find a website's feed URL.
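When a URL turns out not to be a feed, `feedparser.parse()` generally does not raise an exception. Instead, it sets the `bozo` flag (with details in `bozo_exception`) and may return an empty `entries` list. The hypothetical helper below sketches a quick sanity check; keep in mind that some feeds are flagged as `bozo` for minor problems yet still parse usefully.

```python
def feed_problems(parsed):
    """Return a list of human-readable problems found in a feedparser result."""
    problems = []
    if parsed.get("bozo"):
        # feedparser stores the underlying error in bozo_exception
        problems.append(f"Feed was not well-formed: {parsed.get('bozo_exception')}")
    if not parsed.get("entries"):
        problems.append("No entries found; this URL may not be a feed")
    return problems
```

A typical call is `feed_problems(feedparser.parse(rss_url))`; an empty list means nothing obvious went wrong.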

Unlocking Feed Details

RSS feeds, due to their standardized structure, provide a consistent way to access the essential information you need. Let's delve into the common elements you'll encounter at both the channel (overall feed) level and the individual entry level.

Channel-Level Information

The `feed.feed` object (the channel-level part of the result returned by `feedparser.parse()`) contains metadata about the entire RSS feed:

- `feed.feed.title`: The primary title of the feed. This is usually the name of the website, blog, or content source.
- `feed.feed.link`: The main URL of the website associated with the RSS feed. This can be helpful for navigating directly to the source of the content.
- `feed.feed.description`: A short summary providing an overview of the type of content you can expect within the feed (feedparser also exposes this as `feed.feed.subtitle`).
- `feed.feed.updated`: The date and time the feed itself was last updated. Note that this might differ from the publication dates of individual entries.

Entry-Level Information

Each entry within the feed.entries list represents a single item within the feed, whether that's a blog post, news article, podcast episode, or other content format. Here's what you'll commonly find:

- `entry.title`: The title of the specific item.
- `entry.link`: The direct URL to the full content.
- `entry.published`: The date and time the entry was originally published.
- `entry.summary` (feedparser also exposes this as `entry.description`): Depending on how the feed's creator has configured it, this may be a short snippet or the full textual content of the item. Some feeds also supply full content in a separate element, which feedparser makes available as `entry.content`.
- `entry.author`: The author or creator of the content, if this information is provided.

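```python
first_entry = feed.entries[0]

print("Title:", first_entry.title)
print("Link:", first_entry.link)
print("Published:", first_entry.get("published", "unknown"))

# Prefer full content when the feed provides it; otherwise fall back to the summary.
# (In feedparser, entry.description is an alias for entry.summary, so checking
# for "description" cannot tell full content and summary apart.)
if "content" in first_entry:
    print("Full Content:", first_entry.content[0].value)
else:
    print("Summary:", first_entry.get("summary", ""))
```

Example: Accessing Entry Details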

Beyond the Basics

Keep in mind that some RSS feeds may include additional data points or utilize custom namespaces to provide specialized information. feedparser gives you the tools to inspect the full structure of a feed. Don't be afraid to experiment and see what other interesting data you might be able to extract!
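A simple way to start that exploration is to list which field names a particular feed actually provides. Since feedparser's results behave like dictionaries, their keys can be listed directly; the helper below is a hypothetical convenience, not a feedparser function.

```python
def available_fields(parsed):
    """Return the sorted field names present at channel level and in the first entry."""
    channel = sorted(parsed.get("feed", {}).keys())
    entries = parsed.get("entries", [])
    first_entry = sorted(entries[0].keys()) if entries else []
    return {"channel": channel, "first_entry": first_entry}
```

Running `print(available_fields(feedparser.parse(rss_url)))` on a real feed typically reveals extra fields (for example `*_detail` variants or `links`) worth investigating.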


Practical Applications

Now that you understand how to extract data from RSS feeds, let's put that knowledge to work! Here are some common use cases for Python-powered RSS parsing:

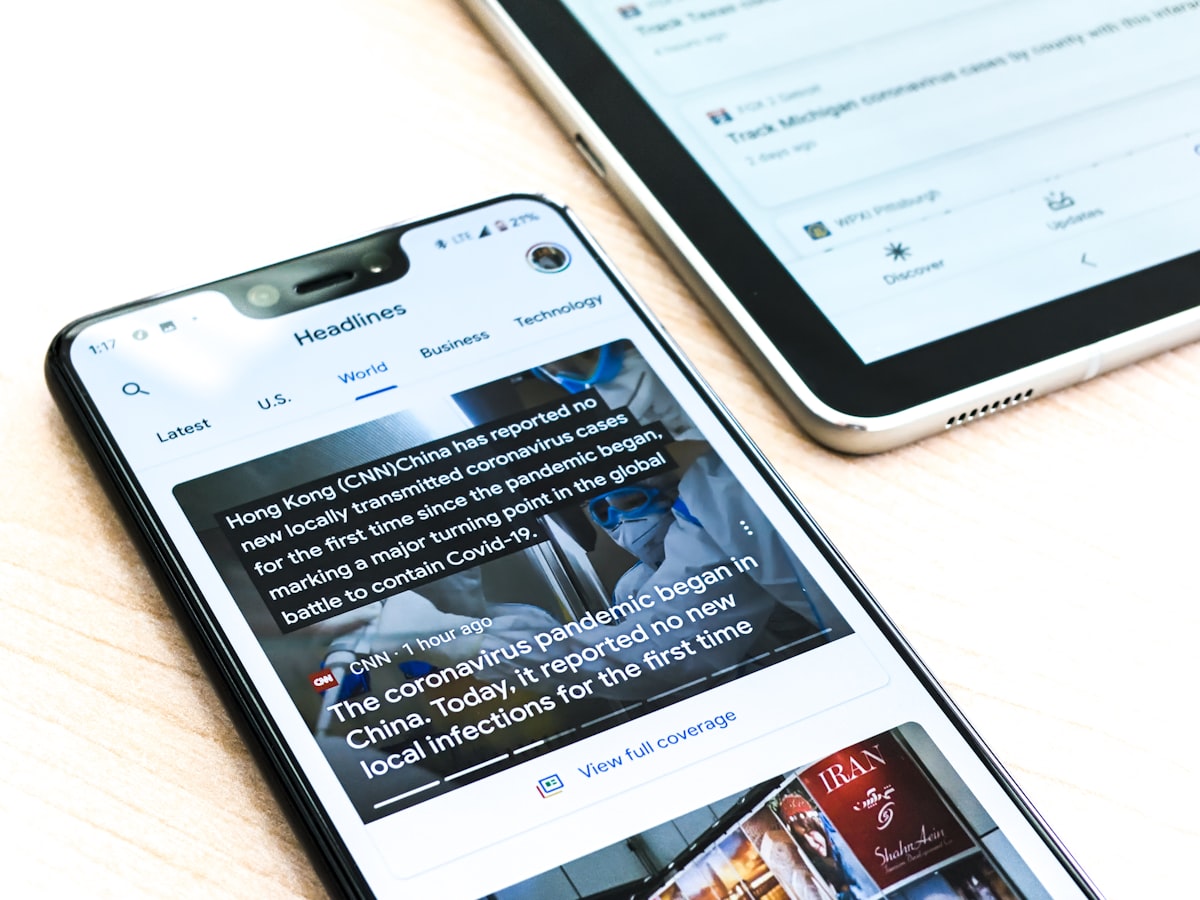

Simple News Aggregator

- How it Works:
  - Fetch RSS feeds from several of your favorite news sites or blogs.
  - Display the latest headlines in a consolidated format, organized by publication date or source.
  - Consider adding a user interface, either a basic command-line tool or a small web app built with Flask or Django.
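The aggregator steps above can be sketched with a hypothetical `latest_headlines` helper that merges entries from several feedparser results and sorts them newest first. It relies on feedparser's `published_parsed` field, a UTC `time.struct_time`, which `calendar.timegm` converts to seconds since the epoch; entries without a parseable date sort last. The limit of 10 is an arbitrary default.

```python
import calendar


def latest_headlines(parsed_feeds, limit=10):
    """Merge entries from several parsed feeds and return (source, title) pairs, newest first."""
    rows = []
    for parsed in parsed_feeds:
        source = parsed.get("feed", {}).get("title", "Unknown source")
        for entry in parsed.get("entries", []):
            stamp = entry.get("published_parsed")
            # timegm treats the struct_time as UTC, matching feedparser's normalization
            seconds = calendar.timegm(stamp) if stamp else 0
            rows.append((seconds, source, entry.get("title", "(untitled)")))
    rows.sort(key=lambda row: row[0], reverse=True)
    return [(source, title) for _, source, title in rows[:limit]]
```

In use, you might call `latest_headlines([feedparser.parse(url) for url in feed_urls])` and print each pair as `[source] title`, where `feed_urls` is your own list of feed addresses.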

Content Monitoring

- Use Case: Track specific keywords or topics across multiple websites.

- Implementation:
  - Define your target keywords.
  - Parse relevant RSS feeds, checking the title, description, and content of each entry.
  - Set up notifications (email, on-screen alerts, etc.) when your keywords are found.

Data Transformation

- Beyond Just Displaying: RSS data doesn't have to stay in RSS format.

  - Convert to JSON: Create structured data usable in other web applications.
  - Populate a CSV: Prepare the data for spreadsheets or further analysis.
  - Feed into Databases: Store content from RSS feeds in a database for archival or search purposes.

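```python
target_keywords = ["Python", "artificial intelligence", "web development"]

for entry in feed.entries:
    # Search both the title and the summary; .get() avoids errors when a field is missing
    text = (entry.get("title", "") + " " + entry.get("summary", "")).lower()
    if any(keyword.lower() in text for keyword in target_keywords):
        print("Match Found! Title:", entry.get("title", "(untitled)"))
        print("Link:", entry.get("link", ""))
```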

Example: Keyword Monitoring Snippet
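The Data Transformation ideas can be sketched with the standard library alone. The helpers below keep three common fields (an illustrative choice), then write them out as JSON, as CSV, or into an SQLite table keyed by link so that re-running the script does not create duplicates. All function names and the table layout are hypothetical.

```python
import csv
import io
import json
import sqlite3

# Fields to keep from each entry (an illustrative choice, not a fixed schema)
FIELDS = ("title", "link", "published")


def to_rows(entries):
    """Flatten feed entries into plain dicts; missing fields become empty strings."""
    return [{field: entry.get(field, "") for field in FIELDS} for entry in entries]


def entries_to_json(entries):
    return json.dumps(to_rows(entries), indent=2)


def entries_to_csv(entries):
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(to_rows(entries))
    return buffer.getvalue()


def store_entries(conn, entries):
    """Archive entries in SQLite, skipping links that are already stored."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS entries (link TEXT PRIMARY KEY, title TEXT, published TEXT)"
    )
    conn.executemany(
        "INSERT OR IGNORE INTO entries (link, title, published) "
        "VALUES (:link, :title, :published)",
        to_rows(entries),
    )
    conn.commit()
```

With a real feed, you would pass `feed.entries` to any of these functions and, for archival, open a file-backed database with `sqlite3.connect("feeds.db")`.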

Tips

- Respect Sources: Be mindful of the frequency at which you fetch feeds. Avoid overloading websites with too many requests.

- Web Scraping Alternative: If a website doesn't provide an RSS feed, web scraping (with libraries like Beautiful Soup) might be an option, but always check the website's terms of service.

Advanced Considerations

Depending on the complexity of your RSS parsing projects, these techniques can prove valuable:

- Conditional Updates
  - Focus on the New: Reduce unnecessary processing by handling only entries published since your last check.
  - Utilizing Timestamps: Most RSS feeds include publication timestamps (`entry.published`). Store the timestamp of the most recent entry you processed and skip anything that isn't newer. The feed itself is still downloaded in full; the timestamp only filters what you process.
  - ETag and Last-Modified: Some servers support these HTTP headers for change tracking, which lets a client skip re-downloading a feed that hasn't changed.

- Asynchronous Fetching
  - Speed Boost for Multiple Feeds: If you're fetching data from many RSS feeds, using libraries like `aiohttp` with Python's `asyncio` can significantly improve performance by making requests concurrently.

- Custom Feed Creation
  - Sharing Your Work: While not directly parsing-related, Python also allows you to generate RSS feeds. This is useful if you want to create a feed for your own blog, curate content, or syndicate information for others.
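Python's standard library is enough for a basic feed. The sketch below assumes RSS 2.0 and uses `xml.etree.ElementTree`; the function name and the item dictionary layout are illustrative. It produces the required channel elements (`title`, `link`, `description`) but omits optional ones such as `pubDate`, and it does not validate its input.

```python
import xml.etree.ElementTree as ET


def build_rss(title, link, description, items):
    """Return an RSS 2.0 document as a string.

    items is a list of dicts with optional "title", "link", and "description" keys.
    """
    rss = ET.Element("rss", version="2.0")
    channel = ET.SubElement(rss, "channel")
    # RSS 2.0 requires these three channel elements
    for tag, value in (("title", title), ("link", link), ("description", description)):
        ET.SubElement(channel, tag).text = value
    for item in items:
        node = ET.SubElement(channel, "item")
        for tag in ("title", "link", "description"):
            if tag in item:
                ET.SubElement(node, tag).text = item[tag]
    # ElementTree escapes special characters such as & and < automatically
    return ET.tostring(rss, encoding="unicode")
```

The returned string can be written to a file (for example `feed.xml`) and served alongside your site.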

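```python
import calendar

# ... (Your code to fetch an RSS feed into `feed`)

# fetch_timestamp_from_storage() and update_timestamp_storage() are placeholders
# for your own storage code (a file, a database, ...)
last_fetch_timestamp = fetch_timestamp_from_storage()  # Newest entry time seen on the previous run

newest_timestamp = last_fetch_timestamp
for entry in feed.entries:
    if not entry.get("published_parsed"):
        continue  # Skip entries without a parseable publication date
    # published_parsed is a UTC struct_time; calendar.timegm converts it to epoch seconds
    # (time.mktime would wrongly interpret it as local time)
    entry_timestamp = calendar.timegm(entry.published_parsed)
    if entry_timestamp > last_fetch_timestamp:
        print("New entry:", entry.get("title", "(untitled)"))  # Process the new entry here
        newest_timestamp = max(newest_timestamp, entry_timestamp)

update_timestamp_storage(newest_timestamp)  # Store the newest entry time for the next run
```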

Example: Fetching New Entries Based on Timestamp
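feedparser can also handle the ETag and Last-Modified headers for you: `feedparser.parse()` accepts `etag` and `modified` arguments, and its result carries the server's `etag`, `modified`, and HTTP `status` values. A status of 304 means the feed has not changed since the previous request. The sketch below keeps those values in a plain dict (a stand-in for real storage) and takes the parse function as a parameter so it can be tested without network access; `fetch_if_changed` is a hypothetical name.

```python
def fetch_if_changed(url, cache, parse):
    """Fetch a feed only if it changed, using ETag/Last-Modified values cached per URL.

    parse is expected to behave like feedparser.parse (pass feedparser.parse in practice).
    """
    previous = cache.get(url, {})
    result = parse(url, etag=previous.get("etag"), modified=previous.get("modified"))
    if result.get("status") == 304:
        return []  # Not modified: nothing new to process
    # Remember the validators the server sent so the next request can be conditional
    cache[url] = {"etag": result.get("etag"), "modified": result.get("modified")}
    return result.get("entries", [])
```

In a script, `new_entries = fetch_if_changed(rss_url, cache, feedparser.parse)` returns an empty list whenever the server reports that nothing changed.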

Important Note: Whether ETag/Last-Modified headers are available depends on the server hosting the feed, while the best way to store timestamps depends on whether you're building a simple script or a more complex application.
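The Asynchronous Fetching idea above mentions `aiohttp`; to stay within the standard library, this sketch swaps in a different technique. It runs a blocking parse function (such as `feedparser.parse`) in worker threads with `asyncio.to_thread` (Python 3.9+), and a semaphore caps how many requests run at once, which also helps you avoid overloading the sites you fetch from. The function name and the limit of 5 are illustrative assumptions.

```python
import asyncio


async def fetch_all(urls, parse, max_concurrency=5):
    """Run parse(url) for every URL concurrently; results keep the order of urls."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def fetch_one(url):
        async with semaphore:
            # parse is blocking, so run it in a worker thread
            return await asyncio.to_thread(parse, url)

    return await asyncio.gather(*(fetch_one(url) for url in urls))
```

From synchronous code, `results = asyncio.run(fetch_all(feed_urls, feedparser.parse))` returns one parsed result per URL, in the same order.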

Beyond feedparser

While feedparser is a fantastic and versatile tool, it's worth knowing that other Python libraries for RSS parsing exist:

- Built-in `xml.etree.ElementTree`: Included in Python's standard library. Offers very granular control over parsing XML data (which RSS is based on), but can be less beginner-friendly than `feedparser`.

- Third-Party Options: Various other libraries might provide specialized features or slightly different approaches to parsing RSS. Consider exploring these if you have very specific requirements.

```python
import xml.etree.ElementTree as ET

# Fetch the RSS content (you'd likely use a library like 'requests' for this in a real application).
# A tiny sample feed stands in for the downloaded text here.
rss_data = """<rss version="2.0">
  <channel>
    <title>Example Feed</title>
    <item><title>First post</title></item>
    <item><title>Second post</title></item>
  </channel>
</rss>"""

root = ET.fromstring(rss_data)  # The root element is <rss>

# Access overall feed title
print("Feed Title:", root.find("channel/title").text)

# Print titles of a few entries
for item in root.findall("channel/item")[:3]:
    print(item.find("title").text)
```

Example: Parsing RSS with xml.etree.ElementTree

Explanation

- Import: We import the `xml.etree.ElementTree` module as `ET`.
- Data: For this simplified example, assume `rss_data` contains the raw text of an RSS feed. In reality, you'd likely fetch it using a library like `requests`.
- Parsing: `ET.fromstring()` converts the XML data into a tree-like structure.
- Navigation: We use the `find()` and `findall()` methods to traverse the tree: `channel/title` targets the feed's title, and `channel/item` finds all entry elements.
- `.text`: Accesses the textual content of the found elements.

Caveats

- Error Handling: A production-ready parser would need more robust error handling than this simple example (e.g., for malformed XML).

- Flexibility: `feedparser` often abstracts away some of the complexities of different RSS variations, making it more beginner-friendly overall.
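The Flexibility caveat is easy to see with Atom feeds: their elements live in the `http://www.w3.org/2005/Atom` namespace and use `<entry>` rather than `<item>`, so RSS paths like `channel/item` find nothing. A minimal sketch of handling that with ElementTree's `namespaces` argument (the helper name is hypothetical):

```python
import xml.etree.ElementTree as ET

# Prefix-to-URI mapping used in the ElementTree search paths below
ATOM_NS = {"atom": "http://www.w3.org/2005/Atom"}


def atom_titles(xml_text):
    """Return the entry titles from an Atom feed given as a string."""
    root = ET.fromstring(xml_text)  # The root is the namespaced <feed> element
    return [
        entry.findtext("atom:title", default="", namespaces=ATOM_NS)
        for entry in root.findall("atom:entry", ATOM_NS)
    ]
```

feedparser, by contrast, exposes both formats through the same `entries` list, so this extra bookkeeping disappears.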

A Note on Responsible RSS Usage

- Respect Rate Limits: Avoid overwhelming websites with too many requests. Space out your feed fetching, especially if you're dealing with smaller sites.

- Consider Caching: If you need to fetch data frequently, store a local copy of the feed and only update it after a reasonable interval.

- Give Attribution: If you're republishing or displaying content from RSS feeds, make sure to provide clear credit to the original source.
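For the caching tip, one minimal approach is an in-memory cache that refetches a feed only after a fixed interval. The class name and the 30-minute default below are illustrative assumptions, not a recommended interval; `parse` would be `feedparser.parse` in practice, and `clock` is injectable so the behavior is easy to test. A long-running script would need persistent storage instead.

```python
import time


class FeedCache:
    """Keep parsed feeds in memory and refetch each one only after max_age seconds."""

    def __init__(self, parse, max_age=1800, clock=time.monotonic):
        self.parse = parse
        self.max_age = max_age
        self.clock = clock
        self._store = {}  # url -> (fetched_at, parsed_result)

    def get(self, url):
        now = self.clock()
        cached = self._store.get(url)
        if cached and now - cached[0] < self.max_age:
            return cached[1]  # Fresh enough: no network request
        result = self.parse(url)
        self._store[url] = (now, result)
        return result
```

Creating one `FeedCache(feedparser.parse)` and calling `cache.get(rss_url)` wherever you need the feed keeps repeated lookups from hitting the site.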

People Also Ask

- Can I use Python to parse RSS feeds from social media? Not directly. Social media platforms typically have their own APIs, which are different from RSS. You might need to use libraries specifically designed to interact with those APIs.

- What's the difference between RSS and Atom feeds? Both are designed for content syndication. Atom is a slightly more modern format with a few additional features, but `feedparser` can handle both RSS and Atom feeds.

- Is RSS still relevant? While not as universally used as it once was, RSS remains valuable for staying updated on content without relying on the algorithms of social media platforms or centralized news aggregators. It's still used by many blogs, news sources, and podcasts.

- Do I need to know XML to parse RSS feeds? Not deeply. Libraries like `feedparser` hide most of the XML complexities. However, a basic understanding of XML structure can be helpful if you encounter unusual feeds or need to use more advanced techniques.

Important Links

Libraries

- feedparser Documentation: The essential reference for getting the most out of the `feedparser` library: https://pythonhosted.org/feedparser/

- Python's xml module: Learn more about the built-in `xml.etree.ElementTree` module: https://docs.python.org/3/library/xml.etree.elementtree.html

Understanding RSS

- RSS 2.0 Specification: For those wanting a deeper dive into the technical details of RSS: https://validator.w3.org/feed/docs/rss2.html

- The History of RSS: A bit of context on the evolution of RSS: https://cyber.harvard.edu/rss/rss.html